Background To The SPACE HUB

Immersive

With SPACE HUB, delivering immersive audio content to listeners can be flexible, and the Object-Based approach means you can tailor the content in the most natural and effective way.

In general, there are three ways of delivering immersive audio content to listeners: Channel-Based, Scene-Based and Object-Based formats.

Channel-Based audio corresponds to one specific speaker setup such as Stereo or 5.1, allowing you to mix and send audio to specific speakers within the setup. This is useful for consumer formats since it does not require a renderer on the receiver side. However, once the content is encoded for a specific setup, it cannot be adapted to a different speaker configuration. It is also not possible to interact with or adjust the sound field when playing back Channel-Based content. Both limitations are inherent to the format, because the speaker feeds are fixed at the time of mixing.

Scene-Based audio aims to encode the sound field as a whole into discrete channels by means of mathematical equations (think of the way the FFT divides a signal into its individual components in the frequency domain). The most common approach is Ambisonics, which allows you to recreate a 3D audio space using multiple formats and different degrees of spatial resolution. Scene-Based content requires a renderer for playback and allows live interaction. Results can vary based on the exact positioning of the loudspeakers, the density of the setup and the spatial resolution of the source material.
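To make the idea of encoding a sound field into channels more concrete, here is a minimal sketch of first-order Ambisonics encoding for a single mono sample. It assumes the AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalisation), in which Azimuth is measured counter-clockwise from the front. The function name is hypothetical and this is not a description of how SPACE HUB handles Scene-Based content.

```python
import math


def encode_first_order(sample, azimuth_deg, elevation_deg):
    """Encode one mono sample into first-order Ambisonics.

    Assumption: AmbiX convention (ACN order W, Y, Z, X, SN3D weights),
    azimuth counter-clockwise from the front, elevation up from ear height.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample                                   # omnidirectional part
    y = sample * math.sin(az) * math.cos(el)     # left/right
    z = sample * math.sin(el)                    # up/down
    x = sample * math.cos(az) * math.cos(el)     # front/back
    return (w, y, z, x)
```

Higher Ambisonic orders add more channels of the same kind, which is where the different degrees of spatial resolution come from.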

Object-Based audio allows you to visually pinpoint sound in three dimensions. A physical representation of the space lets you move these “objects” around in real time, for simple control and efficient management of the detail involved in the 3D space. Object-Based mixing treats each mono or stereo input as an entity that consists of both an audio signal and corresponding metadata, which records details such as position in space and width. This entity is called an Object throughout this documentation. Object-Based audio is highly flexible and makes it easy to start building an immersive experience. It makes no assumptions about the loudspeaker setup at the production stage.
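As an illustration of the audio-plus-metadata idea, an Object could be modelled as below. This is only a sketch: the class and field names are hypothetical and do not reflect SPACE HUB's internal data model or any file format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ObjectMetadata:
    """Hypothetical metadata fields, named after the parameters in this manual."""
    azimuth_deg: float = 0.0
    elevation_deg: float = 0.0
    distance: float = 1.0
    spread: float = 0.0


@dataclass
class AudioObject:
    """An Object: an audio signal together with its metadata."""
    name: str
    samples: List[float] = field(default_factory=list)
    metadata: ObjectMetadata = field(default_factory=ObjectMetadata)
```

Because the position lives in the metadata rather than in the audio, moving an Object changes only the metadata, and the signal itself stays untouched until it is rendered for a particular speaker setup.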

This makes the content easily recordable and portable between different venues, and from creation in a small studio setup to performance in a large auditorium. SPACE HUB also lets you select the rendering Algorithm for each Object independently. This allows content creators to tune the way Objects behave to fit the given venue, follow and complement performances, and most importantly, bring the artistic vision to life.

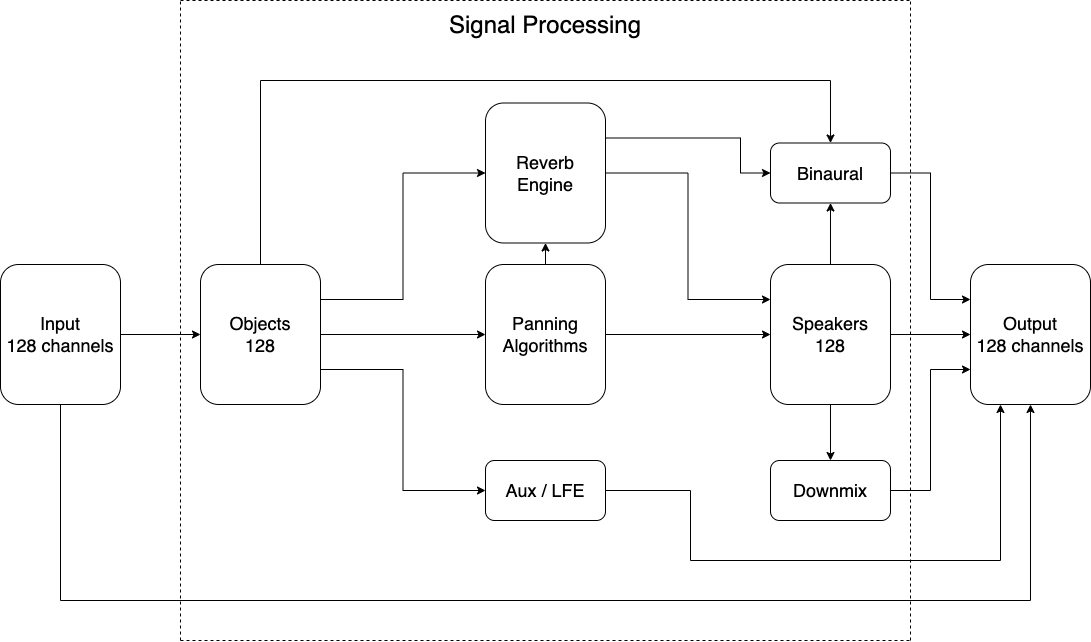

Signal Flow

Object Parameters

Azimuth

The horizontal angle of an Object around the listening position is called Azimuth. 0° places an Object straight ahead (centre stage). When moving clockwise, the Azimuth increases, meaning +90° is on the audience’s right-hand side and -90° on the left. For SPACE HUB, a Spherical coordinate system was chosen over a Cartesian one since it allows an Engineer to scale the entire mix by altering only the Distance parameter, without distorting Object movements.
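The benefit of Spherical coordinates can be sketched in a few lines. The conversion below assumes a particular axis orientation (x to the right, y to the front, z upwards), which is an illustrative choice and not taken from SPACE HUB. The function names are hypothetical.

```python
import math


def spherical_to_cartesian(azimuth_deg, elevation_deg, distance):
    """Convert (Azimuth, Elevation, Distance) to (x, y, z).

    Assumed axes (not from the manual): x = right, y = front, z = up.
    Azimuth is clockwise from straight ahead, Elevation is up from ear height.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = distance * math.cos(el)   # length of the projection on the floor plane
    x = horizontal * math.sin(az)
    y = horizontal * math.cos(az)
    z = distance * math.sin(el)
    return (x, y, z)


def scale_mix(objects, factor):
    """Scale a mix given as (azimuth, elevation, distance) tuples.

    Only the Distance changes; every angle, and therefore every
    movement direction, stays exactly as it was.
    """
    return [(az, el, dist * factor) for az, el, dist in objects]
```

With these assumptions, an Object at Azimuth +90°, Elevation 0° and Distance 2 sits two units to the right of the listener, and scaling the mix moves it further out along the same direction.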

Elevation

Add sound effects and swooshes of movement overhead. Elevation controls the vertical angle (z-axis) of the Object in relation to the listening position. 0° places an Object at ear height, while +90° places it directly above the listener.

Distance

This parameter determines the Distance of the Object from the listener. The origin of this coordinate system can be adjusted by adding offsets to the layout on the Speakers page.

Spread

Spread provides a smooth and uniformly distributed movement within the acoustic space. The Spread parameter behaves differently depending on the Panning Algorithm.

LFE

The LFE Parameters act as Aux Sends to the LFE Aux Buses.

Stereo Width

Build a wider spread in two linked Objects. Stereo Width sets the Azimuth offset between linked Stereo Objects, effectively making the Stereo Base between these Objects wider or narrower. Linked Objects do not have to be used for traditional Stereo signals only. For example, a value of 180° could be used to add an interesting effect that follows an Object on the opposite side of the room, such as a creative delay.
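The relationship between Stereo Width and the Azimuths of two linked Objects can be sketched as follows. This assumes the offset is split evenly around a shared centre Azimuth, which is an illustrative assumption. The manual does not state how SPACE HUB distributes the offset, and the function names are hypothetical.

```python
def wrap_azimuth(angle_deg):
    """Wrap any angle into the range [-180, 180) degrees."""
    return ((angle_deg + 180.0) % 360.0) - 180.0


def linked_azimuths(centre_deg, stereo_width_deg):
    """Return (left, right) Azimuths for two linked Objects.

    Assumption: the width is split symmetrically around the centre.
    """
    half = stereo_width_deg / 2.0
    return (wrap_azimuth(centre_deg - half), wrap_azimuth(centre_deg + half))
```

Under this assumption, a Stereo Width of 180° always places the two Objects on opposite sides of the listener, whatever the centre Azimuth is.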

Orbit

Enables the Orbit that has been selected for this Object.

Random

Enables a random Orbit that has been defined for this Object.

Algorithms

DBAP

Distance-Based Amplitude Panning: DBAP, SPACE HUB’s default Algorithm, distributes the signal across all speakers without making assumptions about a sweet spot. Levels are determined by the distance from the Object to each speaker. Placing the Object in the middle of the panning area will send the same level to all speakers, while placing the Object close to a speaker will result in a high level in that speaker and a low level in the remaining ones. As a result, moving the Object closer to the speakers will result in a more focussed signal. DBAP scales very well to larger or smaller speaker setups. This Algorithm also works well on irregular or asymmetric layouts.
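The behaviour described above can be reproduced with a minimal sketch of the commonly published DBAP gain formula, in which each speaker's gain falls off with its distance to the Object and all gains are normalised to constant total power. The `rolloff_db` and `blur` parameters and their defaults are assumptions made for illustration. They are not SPACE HUB settings, and SPACE HUB's own implementation may differ.

```python
import math


def dbap_gains(source, speakers, rolloff_db=6.0, blur=0.1):
    """Return one gain per speaker for a source position.

    source   -- (x, y) or (x, y, z) position of the Object
    speakers -- list of speaker positions with the same dimensionality
    rolloff_db -- assumed level drop per doubling of distance
    blur     -- small offset that keeps the distance above zero
    """
    exponent = rolloff_db / (20.0 * math.log10(2.0))
    distances = [
        math.sqrt(sum((s - p) ** 2 for s, p in zip(source, spk)) + blur ** 2)
        for spk in speakers
    ]
    weights = [1.0 / d ** exponent for d in distances]
    # Normalise so that the squared gains sum to 1 (constant power).
    k = 1.0 / math.sqrt(sum(w * w for w in weights))
    return [k * w for w in weights]
```

With four speakers on the corners of a square, an Object in the centre is equally distant from all of them and receives the same gain in each, while an Object next to one corner is dominated by that speaker.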

VBAP

Vector Base Amplitude Panning uses the three loudspeakers closest to the Object and pans the signal between them. Vector Base Algorithms only consider the Azimuth and Elevation of an Object, not the Distance. The Spread parameter adds an increasing number of virtual Objects in order to activate more than three speakers. These Algorithms work best on denser, more regularly spaced speaker layouts. On sparse setups with large angles between speakers, the Object can appear to jump when Spread is set to a low value.
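The panning step for one speaker triplet can be sketched as below, following the standard VBAP formulation: the Object's direction is expressed as a weighted sum of the three speaker directions, and the weights are normalised to constant power. Selecting the triplet and handling Spread are not shown. The axis orientation and function names are assumptions for illustration, not SPACE HUB's implementation.

```python
import math


def unit_vector(azimuth_deg, elevation_deg):
    """Direction only; Distance plays no part in vector base panning.

    Assumed axes: x = right, y = front, z = up, Azimuth clockwise.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(el) * math.sin(az), math.cos(el) * math.cos(az), math.sin(el))


def det3(a, b, c):
    """Determinant of the 3x3 matrix with rows a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))


def vbap_triplet_gains(source_dir, l1, l2, l3):
    """Solve source_dir = g1*l1 + g2*l2 + g3*l3 using Cramer's rule."""
    d = det3(l1, l2, l3)
    if abs(d) < 1e-12:
        raise ValueError("speaker directions are coplanar with the origin")
    raw = [det3(source_dir, l2, l3) / d,
           det3(l1, source_dir, l3) / d,
           det3(l1, l2, source_dir) / d]
    if min(raw) < -1e-9:
        raise ValueError("source lies outside this speaker triplet")
    raw = [max(0.0, g) for g in raw]           # remove rounding noise
    norm = math.sqrt(sum(g * g for g in raw))  # constant-power normalisation
    return [g / norm for g in raw]
```

A negative weight means the Object lies outside the triangle spanned by the three speakers, which is why a different triplet takes over as the Object moves and why wide gaps between speakers can produce audible jumps.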

VBIP

Vector Base Intensity Panning builds on the same principles as VBAP, but is optimised for high-frequency content (> 700 Hz). The selection of speakers used to render an Object is identical to VBAP. Only the gain calculations differ.
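The difference in gain calculation can be sketched as follows, using the formulation commonly given in the panning literature: VBAP applies the solved direction weights to the amplitudes, whereas VBIP applies them to the energies, so the gains become square roots of the weights. This is an assumption about the general technique, and SPACE HUB's exact calculation is not documented here. The function names are hypothetical, and `weights` stands for the non-negative, unnormalised direction weights of the selected speakers.

```python
import math


def vbap_normalise(weights):
    """Amplitude panning: gains proportional to the weights, unit power."""
    norm = math.sqrt(sum(w * w for w in weights))
    return [w / norm for w in weights]


def vbip_normalise(weights):
    """Intensity panning: squared gains proportional to the weights."""
    total = sum(weights)
    return [math.sqrt(w / total) for w in weights]
```

Both functions return gains whose squares sum to 1, so the overall level is the same. Only the distribution between the speakers changes.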

LBVBAP

Layer-Based VBAP consists of multiple horizontal layers of 2D VBAP stacked on top of each other. Inside each layer of speakers, the algorithm selects the pair closest to the object and pans between these two. For the vertical panning, the signal is sent to the two adjacent layers with a mix calculated from the object elevation.
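The two stages described above, a pairwise pan inside each layer and a mix between adjacent layers, can be sketched as below. The constant-power crossfade between layers is an assumption made for illustration, since the manual does not state which mixing law SPACE HUB uses. Pair selection inside a layer is not shown, and the function names are hypothetical.

```python
import math


def pair_gains_2d(azimuth_deg, az_a_deg, az_b_deg):
    """2D VBAP between two speakers of one layer (Azimuth clockwise)."""
    def vec(angle_deg):
        r = math.radians(angle_deg)
        return (math.sin(r), math.cos(r))

    def cross(u, v):
        return u[0] * v[1] - u[1] * v[0]

    p, a, b = vec(azimuth_deg), vec(az_a_deg), vec(az_b_deg)
    d = cross(a, b)
    ga, gb = cross(p, b) / d, cross(a, p) / d
    norm = math.hypot(ga, gb)                 # constant-power normalisation
    return (ga / norm, gb / norm)


def layer_weights(elevation_deg, layer_elevations):
    """Return {layer index: weight} for the two layers around the Object.

    Indices refer to the layers sorted by elevation, which must be distinct.
    Assumption: constant-power (sine/cosine) crossfade between layers.
    """
    layers = sorted(layer_elevations)
    el = min(max(elevation_deg, layers[0]), layers[-1])  # clamp to the layer range
    for i in range(len(layers) - 1):
        lo, hi = layers[i], layers[i + 1]
        if lo <= el <= hi:
            t = (el - lo) / (hi - lo)
            return {i: math.cos(t * math.pi / 2), i + 1: math.sin(t * math.pi / 2)}
    return {0: 1.0}                                      # only one layer defined
```

The final gain of a speaker would then be its pair gain multiplied by the weight of its layer, so an Object halfway between two layers is fed equally to the selected pair in each.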

LBVBIP

Layer-Based VBIP works identically to LBVBAP, but the gain calculations are optimised for high-frequency content (> 700 Hz).

Reverb

The SPACE HUB includes an advanced 3D Reverb Engine that takes into account both Speaker and Object locations and offers flexible control over three different parts of the Reverb Tail: Early & Cluster reflections as well as the Late diffuse part.